Netflix leverages AMD Epyc processors to achieve 400 Gbps video data flow per server

Why it matters: It's no secret that AMD's Epyc server CPUs are selling like hotcakes, to the point where Intel is having to heavily discount Xeon chips to stop existing and potential hyperscale customers from going with Team Red. That said, there's a reason why organizations are increasingly looking at options and in some cases choosing AMD over Intel when it comes to building their data center infrastructure.

Recently, Netflix senior software engineer Drew Gallatin offered some valuable insights into the company's efforts to optimize the hardware and software architecture that makes it possible to stream enormous amounts of video entertainment to over 209 million subscribers. The company had been able to squeeze as much as 200 Gb per second from a single server, but at the same time wanted to take things up a notch.

The results of these efforts were presented at the EuroBSDCon 2021 conference. Gallatin said that Netflix was able to push content at up to 400 Gb per second using a combination of AMD's 32-core Epyc 7502P (Rome) CPUs, 256 gigabytes of DDR4-3200 memory, 18 2-terabyte Western Digital SN720 NVMe drives, and two PCIe 4.0 x16 Nvidia Mellanox ConnectX-6 Dx network adapters, each capable of accommodating two 100 Gb connections.

To get an idea of the maximum theoretical throughput of this system, there are eight memory channels providing a bandwidth of around 150 gigabytes per second, and 128 PCIe 4.0 lanes allowing for up to 250 gigabytes per second of I/O bandwidth. In networking units, that's around 1.2 Tb per second and 2 Tb per second, respectively. It's also worth noting that this is what Netflix uses to serve its most popular content.
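
The conversion from storage units (gigabytes) to networking units (terabits) can be checked with a few lines of Python. This is only a sketch of the arithmetic: the function name is made up for illustration, and decimal units are assumed (1 byte = 8 bits, 1 Tb = 1,000 Gb).

```python
def gigabytes_to_terabits(gigabytes_per_second: float) -> float:
    """Convert GB/s to Tb/s, assuming decimal units (1 byte = 8 bits, 1 Tb = 1000 Gb)."""
    return gigabytes_per_second * 8 / 1000


# Figures quoted in the article.
memory_tbps = gigabytes_to_terabits(150)  # eight DDR4-3200 memory channels
pcie_tbps = gigabytes_to_terabits(250)    # 128 PCIe 4.0 lanes

# Two network adapters, each with two 100 Gb ports, expressed in Tb/s.
nic_tbps = 2 * 2 * 100 / 1000

print(f"memory: {memory_tbps} Tb/s, PCIe: {pcie_tbps} Tb/s, network: {nic_tbps} Tb/s")
```

On paper, then, both the memory and the PCIe budgets sit well above the 0.4 Tb per second that the four network ports can carry.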

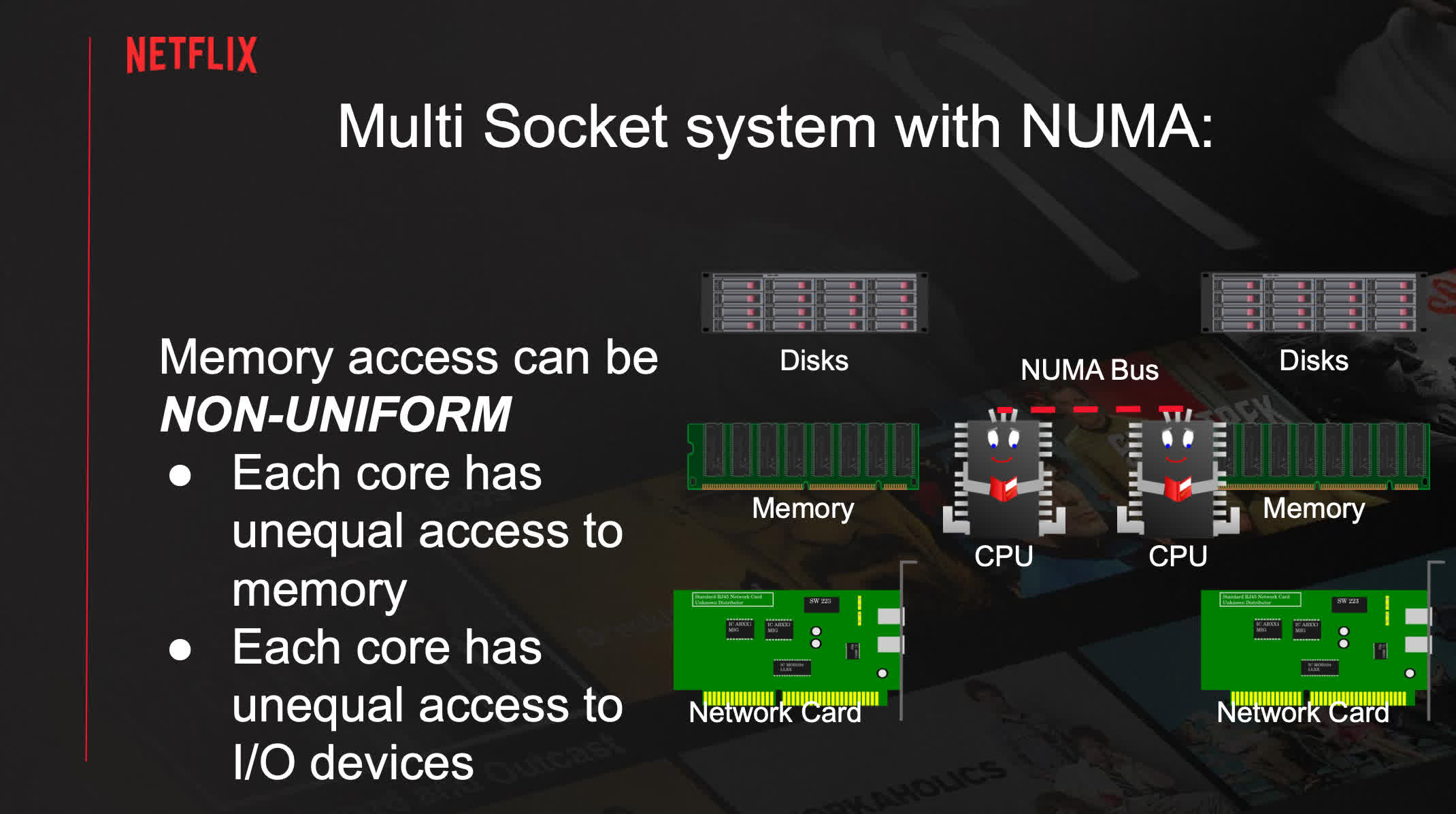

This configuration can normally serve content at up to 240 Gb per second, mainly due to memory bandwidth limitations. Netflix then tried different Non-Uniform Memory Access (NUMA) configurations, with one NUMA node being capable of 240 Gb per second and four NUMA nodes yielding around 280 Gb per second.

However, this approach comes with a host of problems of its own, such as higher latencies. Ideally, you have to keep as much of the bulk data off the NUMA Infinity Fabric as possible to prevent congestion and CPU stalls as a result of competing with normal memory accesses.

The company also looked at disk siloing and network siloing. This essentially means trying to do everything on the NUMA node where the content is stored or the NUMA node chosen by the LACP partner. However, this further complicates matters when trying to balance the whole system and leads to an underutilized Infinity Fabric.

Gallatin explained that getting around these limitations was possible by using software optimizations. By offloading the TLS encryption tasks to the two Mellanox adapters, the company increased the total throughput to 380 Gb per second (up to 400 with additional tweaks), or 190 Gb per second per network interface card (NIC). With the CPU no longer having to perform any encryption, the overall utilization went down to 50 percent with four NUMA nodes and 60 percent without NUMA.
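
One way to see why moving encryption off the CPU helps is a back-of-the-envelope model of memory traffic. The sketch below is not Netflix's own accounting. It assumes each served byte crosses main memory four times when the CPU encrypts it (disk writes it in, CPU reads it, CPU writes the ciphertext, NIC reads it out) and twice when the NIC encrypts inline. Those crossing counts and all names in the code are illustrative assumptions; only the 150 gigabytes per second and 400 Gb per second figures come from the article.

```python
MEMORY_BANDWIDTH_GBYTES = 150  # approximate memory bandwidth quoted in the article (GB/s)
TARGET_GBITS = 400             # target network throughput from the article (Gb/s)

# Assumed number of times each served byte crosses main memory (illustrative only).
CROSSINGS = {
    "software TLS on the CPU": 4,  # disk write, CPU read, CPU write, NIC read
    "TLS offloaded to the NIC": 2,  # disk write, NIC read
}


def memory_traffic_gbytes(network_gbits: float, crossings: int) -> float:
    """Memory traffic (GB/s) needed to serve network_gbits (Gb/s) under this model."""
    return network_gbits / 8 * crossings


def network_ceiling_gbits(memory_gbytes: float, crossings: int) -> float:
    """Highest network rate (Gb/s) that the memory bandwidth allows under this model."""
    return memory_gbytes * 8 / crossings


for label, crossings in CROSSINGS.items():
    needed = memory_traffic_gbytes(TARGET_GBITS, crossings)
    ceiling = network_ceiling_gbits(MEMORY_BANDWIDTH_GBYTES, crossings)
    verdict = "fits" if needed <= MEMORY_BANDWIDTH_GBYTES else "exceeds the budget"
    print(f"{label}: needs {needed:.0f} GB/s ({verdict}), ceiling {ceiling:.0f} Gb/s")
```

Under these assumptions, software TLS would need more memory bandwidth than the platform offers at 400 Gb per second, while NIC offload stays within it. Real servers fall short of such ceilings because of NUMA traffic and other overheads.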

Netflix explored configurations based on other platforms, too, including one with Intel's Xeon Platinum 8352V (Ice Lake) CPU, and Ampere's Altra Q80-30, a behemoth with 80 Arm Neoverse N1 cores running at up to 3 GHz. The Xeon testbed was able to reach a modest 230 Gb per second without TLS offloading, and the Altra system achieved 320 Gb per second.

Not content with the 400 Gb per second result, the company is already building a new system that should handle 800 Gb per second network connections. However, some of the necessary components didn't arrive in time to conduct any tests, so we'll hear more about it next year.

Source: https://www.techspot.com/news/91345-netflix-achieves-400-gbps-video-data-flow-server.html

Posted by: greathouseinart1972.blogspot.com
